Sinapsis TRIpartita is a multidisciplinary performance that combines contemporary dance, electroacoustic music, and real-time visuals. The piece has a single performer, whose body language is transformed into digital information by an infrared depth sensor, the Microsoft Kinect. The sensor tracks the position of the performer's skeleton in space, and this information is sent to the audio and visual platforms, where it is processed in real time. The live audio was developed in SuperCollider, and the visuals in Cinder/C++ with several computer vision algorithms (OpenCV). We developed a single interface through which all incoming information from the sensor and the audio analysis is sent in real time via OSC to the live visuals. For the audio analysis, we used MIDetectorOSC, a SuperCollider library developed by our group, which extracts musical information in real time and sends it via OSC messages to other applications.
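To make the sensor-to-OSC step concrete, here is a minimal sketch of how one skeleton joint could be packed into an OSC 1.0 message and sent over UDP. It is not the code used in the performance: the address `/skeleton/hand_r`, the host, and the port are hypothetical, and only the Python standard library is used.

```python
import socket
import struct


def _pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def encode_joint(address: str, x: float, y: float, z: float) -> bytes:
    """Build an OSC message carrying three big-endian float32 arguments."""
    return _pad(address.encode("ascii")) + _pad(b",fff") + struct.pack(">fff", x, y, z)


def decode_joint(packet: bytes):
    """Inverse of encode_joint: return (address, (x, y, z))."""
    end = packet.index(b"\x00")
    address = packet[:end].decode("ascii")
    offset = (end + 4) & ~3  # start of the type tag string, after padding
    if packet[offset:offset + 5] != b",fff\x00":
        raise ValueError("expected a message with three float arguments")
    args = struct.unpack(">fff", packet[offset + 8:offset + 20])
    return address, args


def send_joint(packet: bytes, host: str = "127.0.0.1", port: int = 9000) -> None:
    """Send one packet over UDP; host and port are placeholder assumptions."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))


# Hypothetical joint position in sensor space (units depend on the tracker).
packet = encode_joint("/skeleton/hand_r", 0.5, -0.25, 2.0)
```

Calling `send_joint(packet)` would deliver the message to any OSC listener on that port, for example a SuperCollider or Cinder application configured to receive on it.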

Video:

SINAPSIS TRIpartita from Emmanuel Ontiveros on Vimeo.

I developed the interaction system that connects all the components: visuals, audio, and sensor. If one of these elements fails during the performance, the others cannot perform, because they are mutually dependent.
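As an illustration of how such an interaction layer might merge the sensor and audio-analysis streams, the sketch below routes incoming messages to handlers by address prefix. It is an assumption-laden outline, not the system used on stage: the `Router` class, the addresses `/skeleton` and `/audio/onset`, and the `state` dictionary are all hypothetical, and plain prefix matching stands in for OSC's fuller address pattern matching.

```python
from typing import Callable, Dict, List, Sequence

Handler = Callable[[str, Sequence[float]], None]


class Router:
    """Dispatch messages to handlers registered under an address prefix."""

    def __init__(self) -> None:
        self._routes: Dict[str, List[Handler]] = {}

    def on(self, prefix: str, handler: Handler) -> None:
        self._routes.setdefault(prefix, []).append(handler)

    def dispatch(self, address: str, args: Sequence[float]) -> int:
        """Call every matching handler and return how many were called."""
        count = 0
        for prefix, handlers in self._routes.items():
            if address == prefix or address.startswith(prefix + "/"):
                for handler in handlers:
                    handler(address, args)
                    count += 1
        return count


# Hypothetical state that a visual engine would read on every frame.
state = {"joints": {}, "onsets": 0}


def on_joint(address: str, args: Sequence[float]) -> None:
    # Store the latest position under the joint name (last address segment).
    state["joints"][address.rsplit("/", 1)[-1]] = tuple(args)


def on_onset(address: str, args: Sequence[float]) -> None:
    # Count note onsets reported by the audio analysis.
    state["onsets"] += 1


router = Router()
router.on("/skeleton", on_joint)
router.on("/audio/onset", on_onset)

router.dispatch("/skeleton/hand_r", [0.5, -0.25, 2.0])
router.dispatch("/audio/onset", [1.0])
unhandled = router.dispatch("/unknown", [])
```

Keeping all routing in one place means the visuals only ever read from `state`, whichever source produced the data.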

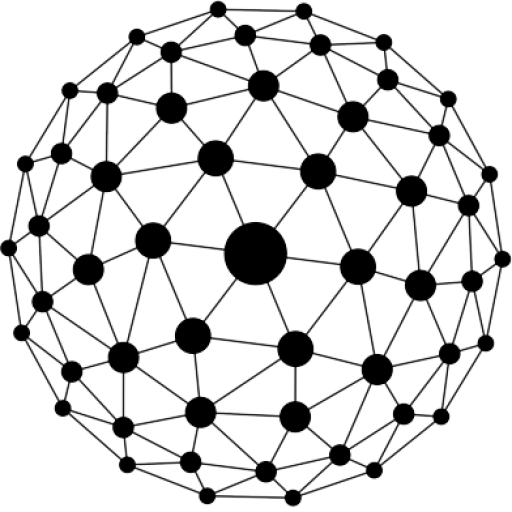

The visuals were made with the open-source C++ framework Cinder. The blocks used include MSAFluids, OSC, MIDI, and the Kinect OpenNI block.

Sinapsis at LPM, CENART (the National Center for the Arts), Mexico City, 2012.

Sinapsis at the Ceremonia Festival, 2013.

TagDF presente en el Festival Ceremonia from TagDF on Vimeo.

Director and music:

Emmanuel Ontiveros

Choreography and dance:

Ambar Luna Quintanar

Programming and video:

Thomas Sanchez Lengeling